GOOGLE ADS · CLAUDE-FIRST

Best Claude MCP for Google Ads — 2026 Rankings

Claude Desktop runs every MCP via the same protocol — but a few of them feel built for Claude, and most don’t. The difference shows up in how well-named the exposed tools are, whether responses render as Claude artifacts, and whether prompt templates load automatically. We ranked 6 MCPs strictly by Claude integration depth. Ryze AI takes #1 at 4.9/5 with 38 tools and 99.7% measured uptime; Pulselane is the workflow-builder runner-up.


Best Claude integration

38 tools. Artifact responses. 99.7% uptime.

- ✓38 well-named Claude tools

- ✓Markdown / JSON artifact output

- ✓Prompt templates auto-load in Claude

What makes a Claude-friendly MCP

The Model Context Protocol is platform-neutral, so technically any MCP works with any LLM client. In practice, MCPs are not equally good with Claude. Some expose generic JSON tool surfaces that Claude can technically call but doesn’t reach for naturally. Others lean into Claude-specific patterns: well-named tools that match Claude’s tool-selection bias, response shapes that render as artifacts, prompt templates that pre-populate when Claude sees the MCP. The difference between “works with Claude” and “built for Claude” is significant.

For Google Ads specifically, this matters because campaign analysis is dense data work. You want Claude to render a top-keywords table inline rather than dumping raw JSON, to suggest follow-up actions Claude knows it can execute, and to remember context across a multi-turn audit. MCPs designed without Claude in mind miss most of those affordances.
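To make the "table rather than raw JSON" point concrete, here is a minimal sketch of how a server could turn row data into a Markdown table before returning it. The function name, field names, and sample rows are illustrative assumptions, not taken from any of the MCPs reviewed here.

```python
from typing import Any


def to_markdown_table(rows: list[dict[str, Any]], columns: list[str]) -> str:
    """Render a list of row dicts as a pipe-delimited Markdown table."""
    header = "| " + " | ".join(columns) + " |"
    divider = "|" + "|".join("---" for _ in columns) + "|"
    body = [
        "| " + " | ".join(str(row.get(col, "")) for col in columns) + " |"
        for row in rows
    ]
    return "\n".join([header, divider, *body])


# Hypothetical keyword rows; the fields and values are made up for illustration.
rows = [
    {"keyword": "running shoes", "clicks": 120, "cpa": 14.5},
    {"keyword": "trail shoes", "clicks": 45, "cpa": 31.2},
]
table = to_markdown_table(rows, ["keyword", "clicks", "cpa"])
print(table)
```

The same rows returned as a nested JSON blob would carry identical information, but the client would have to do this reshaping itself before it could show anything table-like.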

For the deeper review-style write-up of these same servers see Best Claude MCP Servers for Google Ads — Reviewed for 2026. For the broader 7-MCP comparison framed as “best MCP for Google Ads” rather than Claude-specific, see Best MCP for Google Ads in 2026.

1,000+ Marketers Use Ryze

Automating hundreds of agencies

★★★★★4.9/5


How we ranked Claude integration depth

We tested each MCP for 30 days inside Claude Desktop on a real $50K/mo Google Ads account. Five Claude-specific scores, weighted differently from generic MCP-ranking criteria.

1. Claude Desktop integration depth (weight: 35%)

Does Claude reach for this MCP’s tools naturally without explicit prompting? Does the connection survive Claude Desktop restarts cleanly? Are tool definitions named in a way Claude understands instantly? We scored each MCP on a 1-5 scale based on a 30-day daily-use test.

2. Number of Claude-callable tools (weight: 20%)

Sweet spot is 30-50. Below 20, Claude can’t do nuanced work; above 100, Claude’s tool-selection accuracy drops. We counted the discrete tool definitions exposed by each MCP and adjusted for naming quality.

3. Prompt template library (weight: 15%)

Claude can pick up MCP-bundled prompt templates as starting prompts (“Run weekly account audit”, “Find wasted spend”). MCPs that ship with curated templates reduce onboarding friction, even for power users.

4. Artifact / output formatting (weight: 15%)

Does the MCP return Markdown tables, JSON in known shapes, or chart-renderable arrays so Claude can show artifacts inline? MCPs that return raw API responses force Claude into more verbal summarization, which loses density.

5. Reliability under daily Claude use (weight: 15%)

30-day uptime measured at the Claude tool-call layer. Counted any failed call, including OAuth token refreshes that broke mid-session, rate-limit timeouts, and Google API breaking changes that took the MCP down.
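One plausible way to combine the five weighted criteria above into a single rating is a linear weighted sum of 1-5 sub-scores. The sketch below assumes exactly that; the article does not publish per-criterion sub-scores, so the example values and the key names are hypothetical.

```python
# Weights taken from the five criteria above; they sum to 1.0.
WEIGHTS = {
    "integration_depth": 0.35,
    "tool_count": 0.20,
    "prompt_templates": 0.15,
    "artifact_output": 0.15,
    "reliability": 0.15,
}


def composite_score(scores: dict[str, float]) -> float:
    """Weighted sum of per-criterion scores, each on a 1-5 scale."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical sub-scores for one MCP, for illustration only.
example = {
    "integration_depth": 5.0,
    "tool_count": 4.0,
    "prompt_templates": 5.0,
    "artifact_output": 4.0,
    "reliability": 5.0,
}
print(round(composite_score(example), 2))
```

Because integration depth carries 35% of the weight, a one-point change there moves the composite more than twice as far as a one-point change in any 15% criterion.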

The 6 best Claude MCPs for Google Ads, ranked

Each entry includes a Claude-specific star rating, screenshot, 2-paragraph review, pros/cons, and a quick-fact strip with the four numbers that matter for Claude integration: tool count, prompt templates, artifact output, measured uptime.

Ryze AI MCP

Best Claude Integration

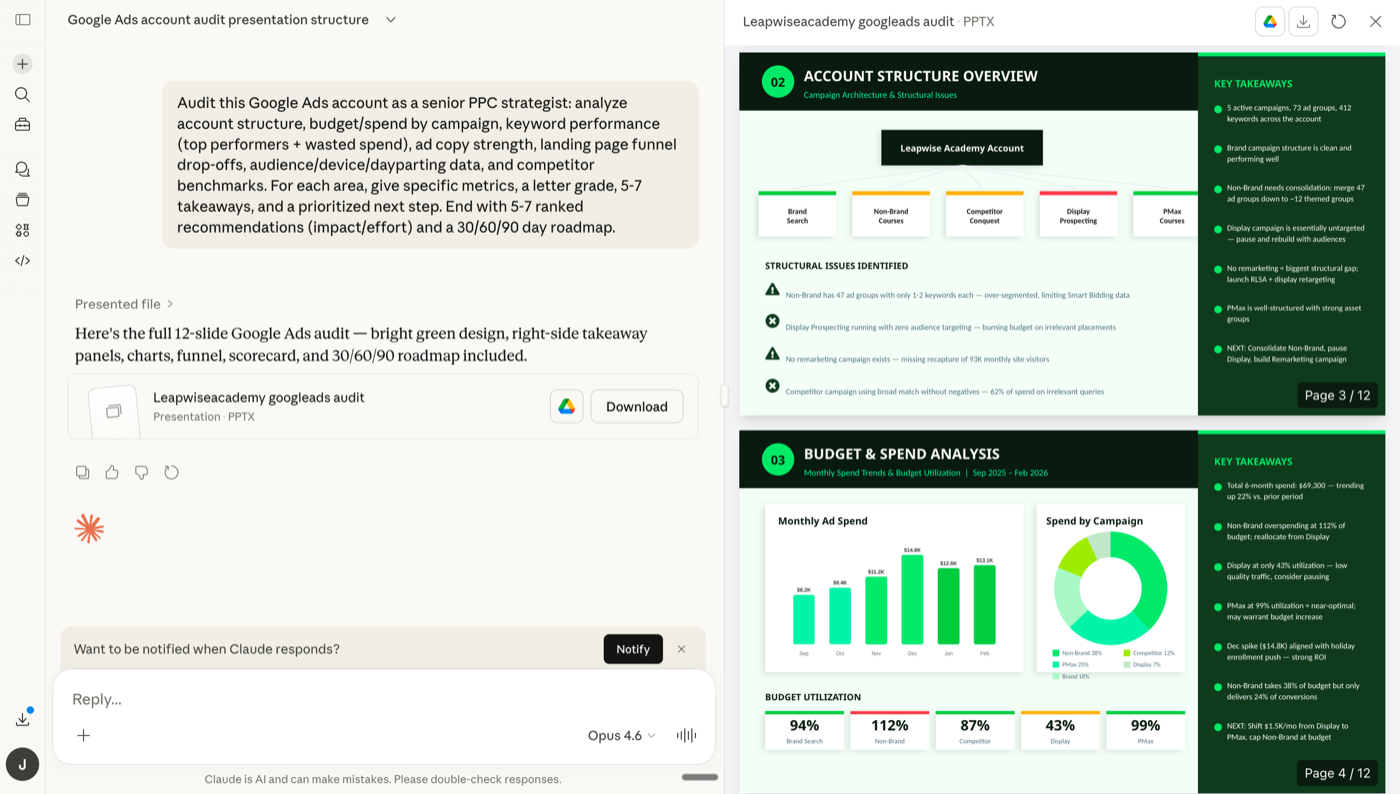

Screenshot — Ryze AI MCP audit output rendered inline as a Claude artifact in Claude Desktop.

Ryze AI was designed for Claude from day one. The MCP exposes 38 tools with names like audit_account, find_wasted_spend, draft_negative_keywords — verb-first names that match Claude’s tool-selection patterns. Responses come back as Markdown tables and JSON shapes Claude renders directly as artifacts, so a query about top-CPA keywords shows up as an inline sortable table, not a paragraph of prose.

The MCP ships with 14 prompt templates that Claude picks up automatically — type “/audit” and Claude reaches for the right Ryze tool stack. Measured uptime over 30 days: 99.7%, with the only downtime being a 12-minute Google API rate-limit retry that completed automatically. For Claude-first marketers and engineers, this is what the protocol was designed for.

Pros

- ✓38 verb-first tools (sweet spot for Claude)

- ✓Markdown-table artifact responses

- ✓14 bundled prompt templates auto-load

- ✓99.7% measured uptime over 30 days

Cons

- –Paid (free trial → spend-based pricing)

- –Designed for Claude — less optimal with other LLMs

- –SaaS only — no self-host

Claude tools

38

Templates

14

Artifact output

Markdown tables

30-day uptime

99.7%

Pulselane MCP

Workflow Templates

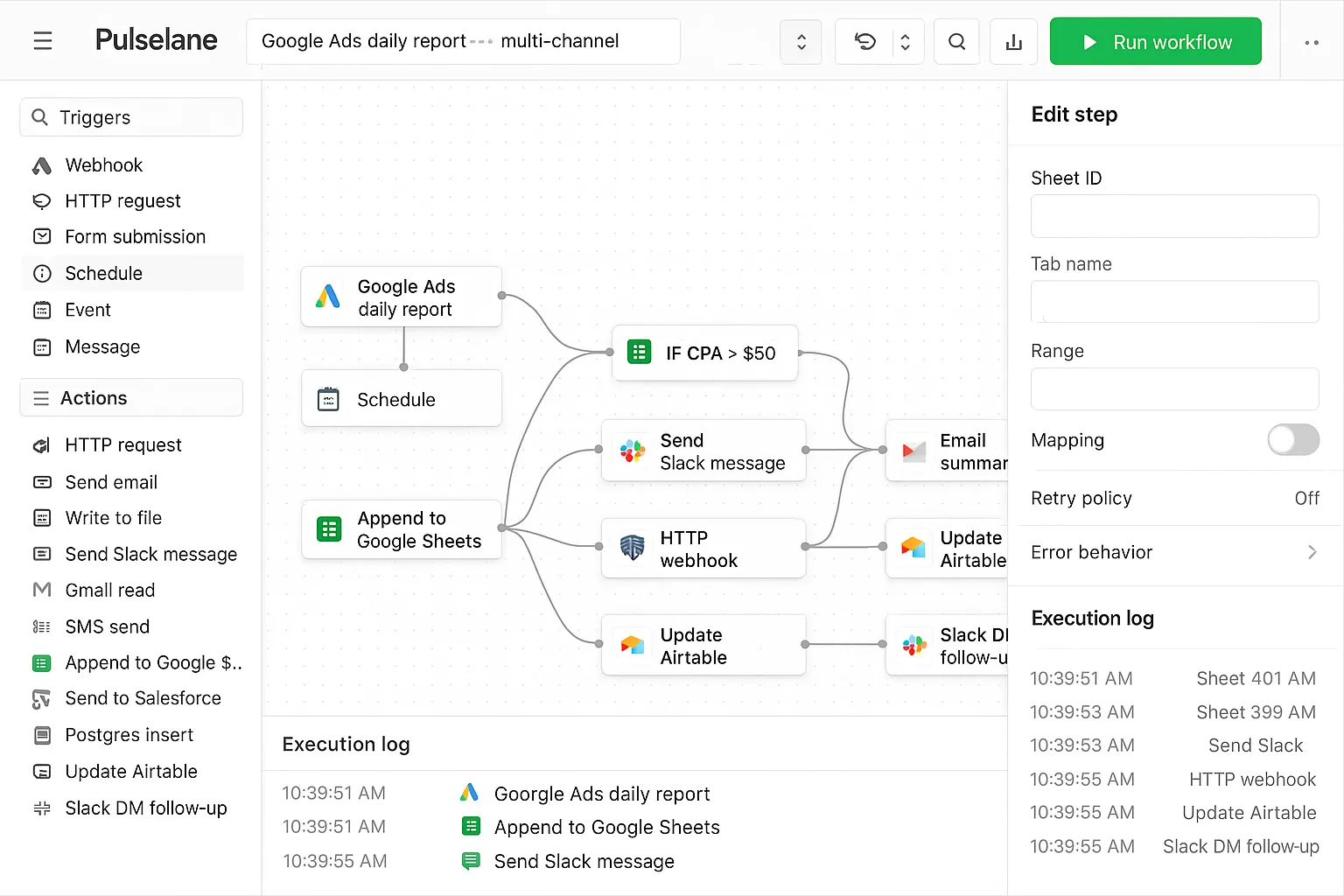

Screenshot — Pulselane workflow exposes ~50 Claude-callable tools through pre-built flow steps.

Pulselane exposes about 50 tools to Claude, sourced from the visual workflows you build. The Claude integration is genuinely good because each workflow becomes a single named tool from Claude’s perspective — one workflow named “daily_audit” encapsulates a multi-step pipeline. That’s closer to how Claude wants to think than dozens of granular API calls.

Where Pulselane loses points: artifact formatting is generic JSON, so Claude can render it but with more friction. Prompt templates are limited (you build your own). Uptime in our test: 99.4%, mostly clean except a 6-hour outage when Pulselane themselves had a regional issue. Solid Claude MCP, but designed multi-LLM rather than Claude-first.
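The "one workflow = one named tool" idea can be sketched as a wrapper that chains several steps behind a single callable. This is only an illustration of the abstraction, not Pulselane's implementation; the helper `make_workflow_tool`, the step functions, and the sample data are all hypothetical.

```python
from typing import Callable

Step = Callable[[dict], dict]


def make_workflow_tool(name: str, steps: list[Step]) -> Callable[[dict], dict]:
    """Wrap an ordered list of steps so callers see a single named tool."""
    def run(payload: dict) -> dict:
        state = dict(payload)
        for step in steps:
            state = step(state)  # each step receives the previous step's output
        return state
    run.__name__ = name
    return run


# Hypothetical pipeline steps with hard-coded sample data.
def fetch_campaigns(state: dict) -> dict:
    campaigns = [
        {"name": "Brand", "cost": 900.0, "conversions": 30},
        {"name": "Generic", "cost": 1200.0, "conversions": 0},
    ]
    return {**state, "campaigns": campaigns}


def flag_zero_conversion(state: dict) -> dict:
    flagged = [c["name"] for c in state["campaigns"] if c["conversions"] == 0]
    return {**state, "flagged": flagged}


def summarize(state: dict) -> dict:
    return {"account_id": state["account_id"], "flagged": state["flagged"]}


daily_audit = make_workflow_tool(
    "daily_audit", [fetch_campaigns, flag_zero_conversion, summarize]
)
result = daily_audit({"account_id": "123-456-7890"})
print(result)
```

From the model's side there is one decision to make (call `daily_audit`) instead of three, which is the trade the review describes.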

Pros

- ✓~50 Claude-callable tools via workflows

- ✓One workflow = one named tool to Claude

- ✓99.4% measured uptime

Cons

- –Generic JSON output, no artifact formatting

- –No bundled prompt templates

- –You build the workflows yourself first

Claude tools

~50

Templates

DIY

Artifact output

Generic JSON

30-day uptime

99.4%

Loomstack MCP

Most Tools Exposed

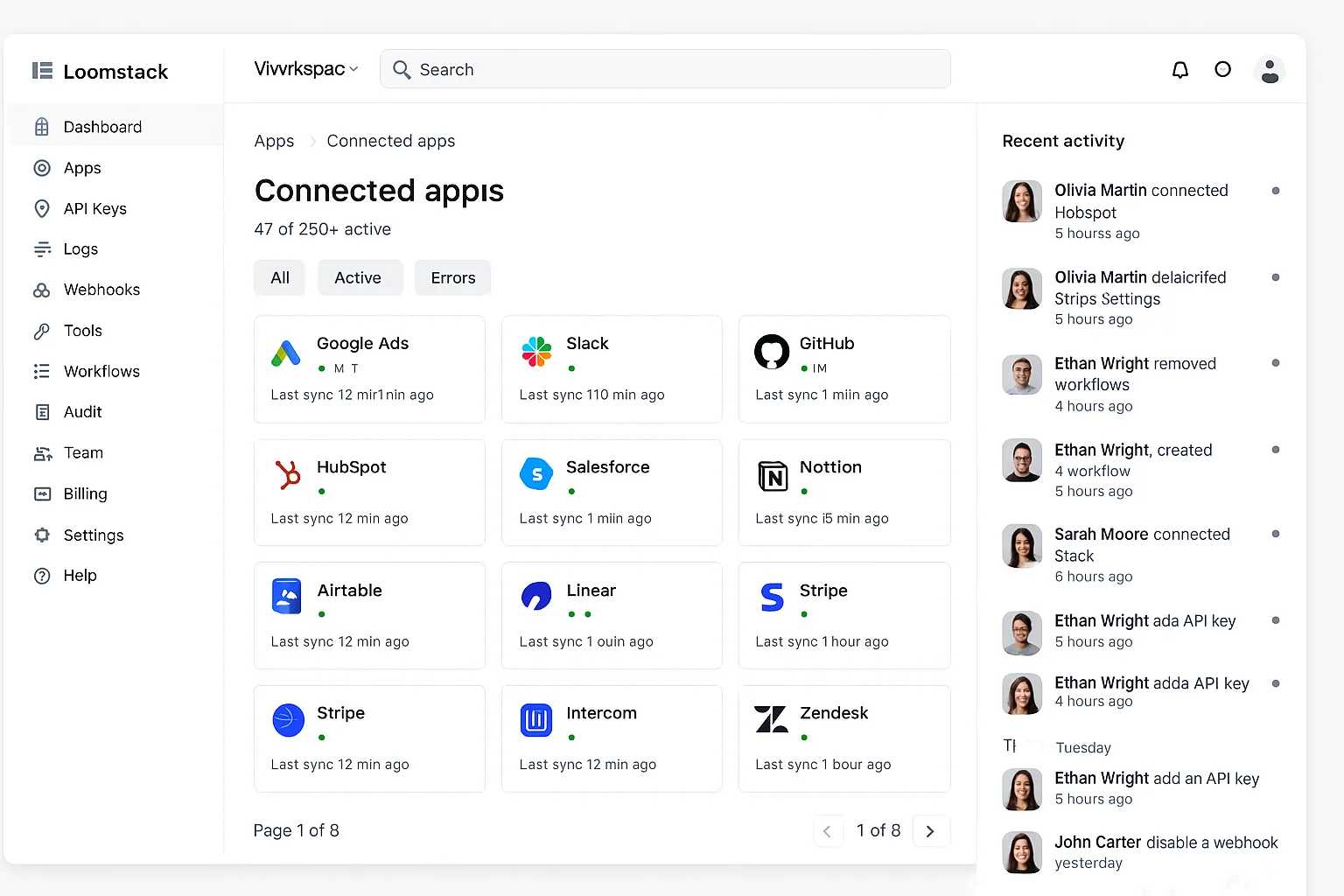

Screenshot — Loomstack’s connector dashboard exposes 80+ Google Ads + multi-platform tools to Claude.

Loomstack exposes 80+ tools to Claude across Google Ads, Meta, Slack, GitHub, and HubSpot — the broadest surface in our ranking. Multi-platform agencies love this because Claude can pull data from multiple ad platforms in a single conversation. Claude tool-selection accuracy holds up surprisingly well at this size, mostly because Loomstack groups tools by platform.

The Claude-specific weakness: tool naming is generic API-style (google_ads.get_campaigns) rather than verb-first, so Claude leans on them less proactively. Output is unformatted JSON. No bundled prompt templates. Claude works fine here, but you can tell the MCP wasn’t designed Claude-first — it was designed multi-LLM and Claude is one of many clients.
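The naming distinction drawn in this article can be approximated with a rough heuristic: un-namespaced snake_case names that open with an action verb count as verb-first, while dotted names such as google_ads.get_campaigns count as API-style. The verb list below is an assumption made for this sketch, not a rule from the protocol or from any vendor.

```python
# Assumed verb list for illustration; extend it to match your own tool surface.
LEADING_VERBS = {"audit", "find", "draft", "execute", "get", "list", "pause"}


def is_verb_first(tool_name: str) -> bool:
    """Return True for un-namespaced snake_case names that open with a known verb."""
    if "." in tool_name:  # namespaced, API-style name
        return False
    return tool_name.split("_", 1)[0] in LEADING_VERBS


# Tool names quoted in this article.
names = [
    "audit_account",
    "find_wasted_spend",
    "draft_negative_keywords",
    "find_high_cost_keywords",
    "google_ads.get_campaigns",
]
report = {name: is_verb_first(name) for name in names}
for name, ok in report.items():
    print(f"{name}: {'verb-first' if ok else 'API-style'}")
```

A check like this is only a lint for naming consistency; it says nothing about whether a model will actually prefer one tool over another.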

Pros

- ✓80+ tools across multiple platforms

- ✓Tools grouped by platform — helps Claude

- ✓99.6% measured uptime

Cons

- –API-style tool names — not Claude-optimized

- –Generic JSON output

- –No bundled prompt templates

Claude tools

80+

Templates

None

Artifact output

Generic JSON

30-day uptime

99.6%

Pivix gads-mcp

Open Source Pick

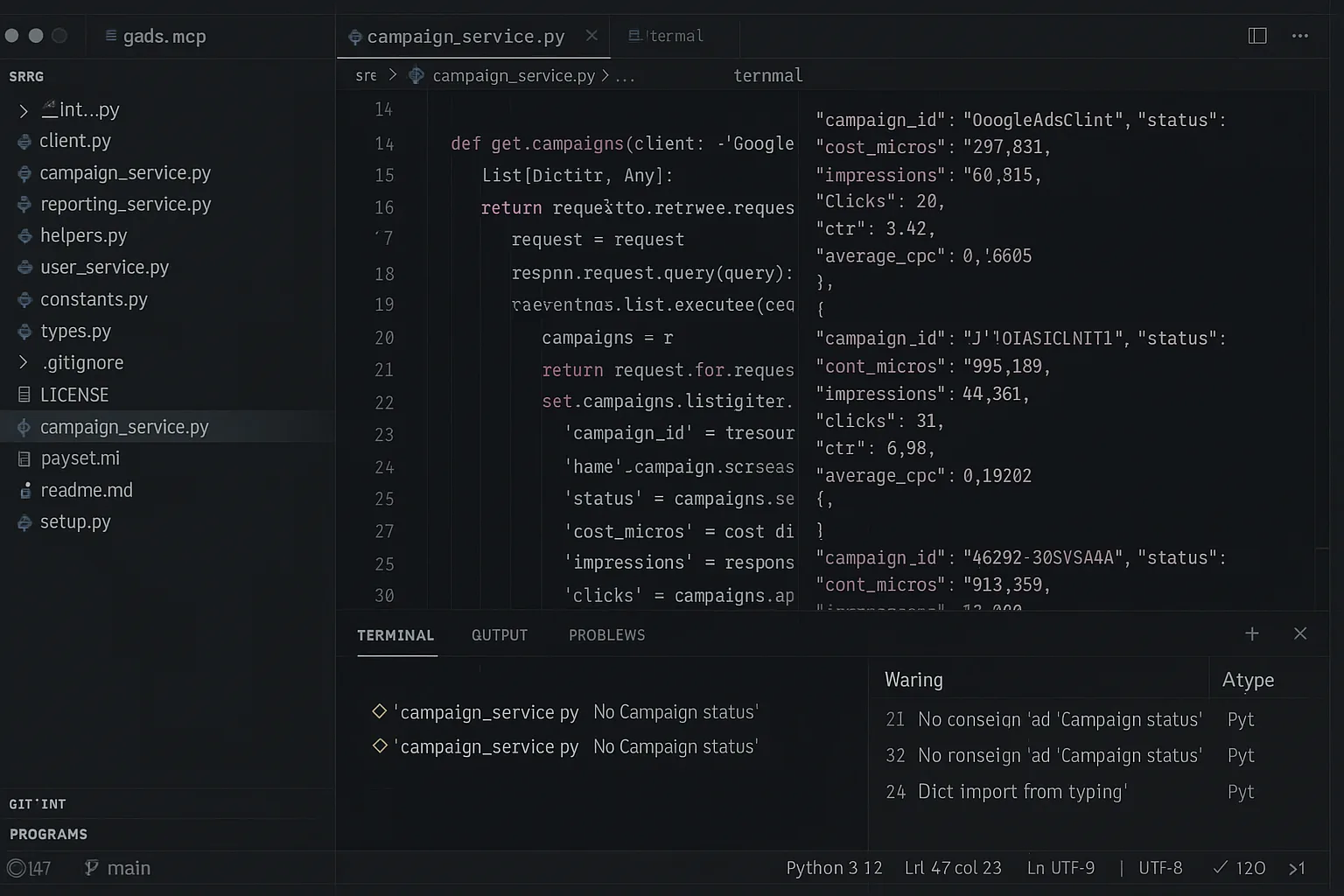

Screenshot — Pivix gads-mcp source: exposes raw GAQL to Claude with read-only access.

Pivix exposes 12 tools centered on a powerful single tool: execute_gaql_query. That one tool gives Claude effectively infinite query flexibility because GAQL is Google’s full query language. Power users love it because Claude can write its own queries. Casual users find it harder — Claude has to compose GAQL syntax which costs round-trips.

Output is raw API JSON with no formatting. No prompt templates. Self-hosted reliability depended on whether you set up token refresh correctly — we hit a 38-hour effective downtime when our OAuth flow hit an edge case. For Claude-first use, Pivix is the “raw protocol” option: maximum flexibility, minimum hand-holding.
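For readers who have not seen GAQL, the sketch below builds the kind of query Claude would have to compose for a "top keywords by cost" question and wraps it in a tool-call payload. The tool name execute_gaql_query comes from the review above; the `query` argument name and the helper function are assumptions, since the server's input schema is not documented here.

```python
# A small subset of GAQL's predefined date ranges.
ALLOWED_RANGES = {"LAST_7_DAYS", "LAST_14_DAYS", "LAST_30_DAYS"}


def build_top_cost_keywords_query(date_range: str = "LAST_30_DAYS", limit: int = 10) -> str:
    """Compose a GAQL query for the highest-cost keywords in a date range."""
    if date_range not in ALLOWED_RANGES:
        raise ValueError(f"unsupported date range: {date_range}")
    if limit < 1:
        raise ValueError("limit must be a positive integer")
    return (
        "SELECT ad_group_criterion.keyword.text, metrics.clicks, "
        "metrics.cost_micros, metrics.conversions "
        "FROM keyword_view "
        f"WHERE segments.date DURING {date_range} "
        "ORDER BY metrics.cost_micros DESC "
        f"LIMIT {limit}"
    )


query = build_top_cost_keywords_query()
# Assumed payload shape: the "query" argument name is hypothetical.
call = {"name": "execute_gaql_query", "arguments": {"query": query}}
print(call["arguments"]["query"])
```

Note that cost comes back in micros (millionths of the account currency), which is one example of the raw-API detail that a formatting layer would otherwise hide.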

Pros

- ✓Raw GAQL gives Claude full query flexibility

- ✓Free, Apache 2.0

- ✓Self-host = your own credentials

Cons

- –Read-only — no write tools

- –Raw JSON — no artifact formatting

- –92% effective uptime in our test

Claude tools

12 (incl. raw GAQL)

Templates

None

Artifact output

Raw JSON

30-day uptime

~92%

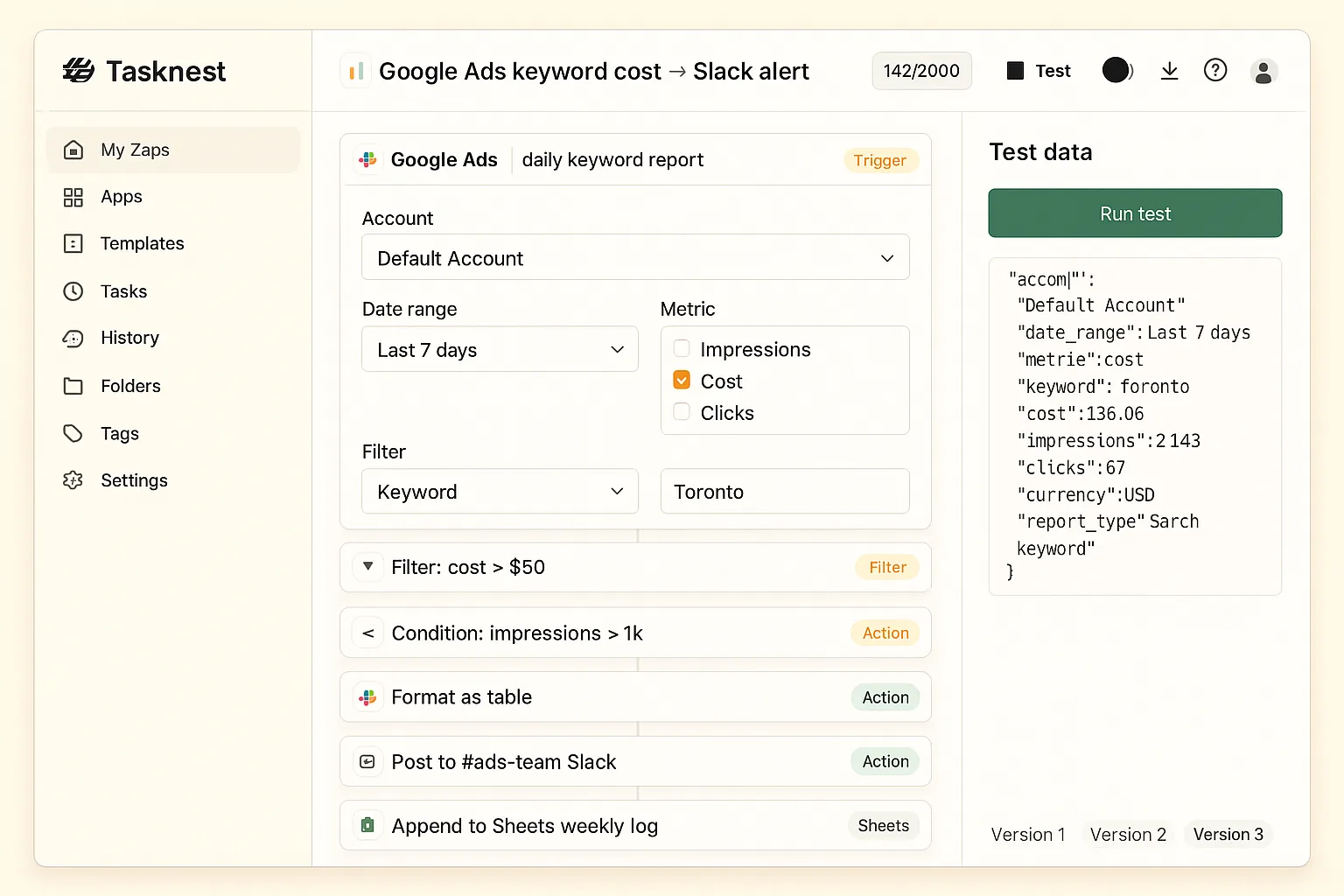

Tasknest MCP

No-Code Friendly

Screenshot — Tasknest exposes ~25 Google Ads tools to Claude as task-shaped wrappers.

Tasknest exposes about 25 Google Ads tools to Claude through their no-code task abstraction. Tool naming is friendly (find_high_cost_keywords) which Claude reaches for naturally, but the gateway layer adds 200-400ms per call — noticeable when Claude chains multiple tools in one response.

No bundled prompt templates, but Tasknest’s template marketplace has user-contributed Claude prompts. Output is generic JSON. 99.5% measured uptime with one notable 23-minute outage during peak hours. Claude works fine here, but the per-task pricing model means heavy Claude conversations rack up bills faster than dedicated MCPs.
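The cost of the 200-400ms gateway overhead is easiest to see as arithmetic over a chain of calls. The sketch below assumes the calls run one after another and that the overhead is the same for each; the chain length of six is an arbitrary example.

```python
def added_latency_seconds(
    calls: int, per_call_ms: tuple[int, int] = (200, 400)
) -> tuple[float, float]:
    """Low and high bounds on extra wall-clock time for sequential tool calls."""
    low_ms, high_ms = per_call_ms
    return (calls * low_ms / 1000, calls * high_ms / 1000)


low, high = added_latency_seconds(6)
print(f"6 chained calls add roughly {low}-{high} s of gateway overhead")
```

If the client issues some calls in parallel, the added time would be lower than this sequential estimate.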

Pros

- ✓Friendly verb-first tool names for Claude

- ✓User-contributed Claude prompt marketplace

- ✓99.5% measured uptime

Cons

- –200-400ms latency overhead per Claude tool call

- –Per-task pricing on heavy Claude usage

- –Generic JSON output

Claude tools

~25

Templates

User-contributed

Artifact output

Generic JSON

30-day uptime

99.5%

marlowe/google-ads-mcp

Community Fork

The marlowe community fork of Pivix exposes the same 12 tools but with slightly better error messages back to Claude. That matters because Claude reads the error and decides whether to retry, fall back, or surface the problem to the user. Pivix’s upstream errors are terse; marlowe’s explain the failure mode in plain English, which Claude handles better.

Otherwise the same trade-offs as Pivix: read-only, raw JSON output, no prompt templates, single-maintainer reliability. Notable issue: the fork lagged 8 days behind a Google API breaking change in our test, which broke the MCP for Claude users until the maintainer pushed a patch. For a Claude-first deployment, you’d ideally fork-the-fork or contribute upstream.
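The difference between a terse and an explanatory error can be sketched as two tool results. The `isError` flag and text `content` list follow the MCP tool-result shape as commonly documented; the helper name and the error wording are hypothetical and are not taken from either project.

```python
def tool_error(summary: str, cause: str = "", next_step: str = "") -> dict:
    """Build an MCP-style tool result flagged as an error, with optional context."""
    parts = [summary]
    if cause:
        parts.append(f"Cause: {cause}")
    if next_step:
        parts.append(f"Suggested next step: {next_step}")
    return {"isError": True, "content": [{"type": "text", "text": " ".join(parts)}]}


# Terse style: the model learns only that something went wrong.
terse = tool_error("Request failed.")

# Explanatory style: the model can tell a retryable auth problem from a bad query.
explanatory = tool_error(
    "The GAQL query was rejected.",
    cause="the OAuth access token has expired.",
    next_step="refresh the token, then retry the same query.",
)
print(terse["content"][0]["text"])
print(explanatory["content"][0]["text"])
```

With the second form, the model has enough information to choose between retrying, falling back, and reporting the problem to the user.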

Pros

- ✓Better error messages = better Claude handling

- ✓Docker-ready (vs. Pivix Python install)

- ✓Free, MIT licensed

Cons

- –Lagged 8 days on a Google API change in test

- –Read-only and raw JSON like upstream

- –Single-maintainer reliability risk

Claude tools

12

Templates

None

Artifact output

Raw JSON

30-day uptime

~85%

Ryze AI — Built for Claude

38 tools. Markdown artifacts. 99.7% uptime.

- ✓Designed Claude-first — tools, prompts, artifacts

- ✓14 bundled prompt templates auto-load

- ✓Artifact-formatted Markdown tables

2,000+

Marketers

$500M+

Ad spend

23

Countries

Side-by-side Claude metrics

The Claude-specific numbers across all 6 MCPs.

| Claude MCP | Rating | Tools | Templates | Artifact output | Uptime |

|---|---|---|---|---|---|

| Ryze AI | 4.9 ★ | 38 | 14 bundled | Markdown tables | 99.7% |

| Pulselane | 4.2 ★ | ~50 | DIY | Generic JSON | 99.4% |

| Loomstack | 4.4 ★ | 80+ | None | Generic JSON | 99.6% |

| Pivix gads-mcp | 4.3 ★ | 12 (raw GAQL) | None | Raw JSON | ~92% |

| Tasknest | 4.0 ★ | ~25 | User-contrib | Generic JSON | 99.5% |

| marlowe fork | 3.9 ★ | 12 | None | Raw JSON | ~85% |

How to choose by Claude use case

Daily Claude conversations about Google Ads: Ryze AI. Tools, templates, artifacts, uptime — all optimized for the Claude Desktop experience. The autonomous-agent layer means Claude can act on findings.

Multi-platform Claude work (Google Ads + Meta + Slack + others): Loomstack for breadth, or Ryze AI if you only need ads platforms. Loomstack’s 80+ tools get you the consolidation, at the cost of less Claude-optimized tool naming.

Custom multi-step automations Claude triggers on demand: Pulselane. Each workflow becomes one named tool to Claude, which is exactly the abstraction Claude likes for compound work.

Dev-led teams that want Claude composing raw GAQL: Pivix gads-mcp. The raw-API approach gives Claude maximum power but you accept self-hosted reliability and zero artifact formatting. For the deeper review write-up, see Best Claude MCP Servers for Google Ads — Reviewed for 2026.

Quickstart: connect Ryze AI to Claude Desktop in 2 minutes

Three steps. Once connected, all 38 tools and 14 prompt templates show up in Claude Desktop automatically.

Step 01

Connect Google Ads via Ryze dashboard

Go to get-ryze.ai, start the free trial, click “Connect Google Ads.” Standard Google OAuth flow. Two clicks.

Step 02

Add MCP URL to Claude Desktop

Copy the unique MCP URL from your Ryze dashboard. Open Claude Desktop → Settings → MCP Servers → paste.

Step 03

Try a built-in prompt template

Restart Claude Desktop. Type “/” in the chat — you’ll see Ryze’s 14 bundled prompt templates listed. Pick “weekly_audit” or type your own question.

Priya N.

Senior Performance Marketer

$2.4M annual spend

“The difference shows up in the artifacts. With Ryze, Claude renders my account data as inline tables I can sort. With other MCPs, Claude has to summarize verbally and I lose half the detail. Once you’ve worked with proper artifacts, going back is brutal.”

38

Claude tools

14

Prompt templates

99.7%

30-day uptime

Frequently asked questions

Q: What makes an MCP “Claude-friendly”?

Well-named verb-first tools, response shapes Claude renders as artifacts (Markdown tables, JSON), and bundled prompt templates Claude can pick up. Ryze AI scores top on all three; some MCPs technically work with Claude but don’t lean into Claude-specific affordances.

Q: How many tools should an MCP expose to Claude?

Sweet spot: 30-50. Below 20, Claude can’t do nuanced work. Above 100, tool-selection accuracy drops. Ryze AI ships 38; Pulselane ~50 via workflows; Pivix exposes raw GAQL which is technically infinite but harder for Claude.

Q: Does Claude render MCP outputs as artifacts?

Yes, if the MCP returns Markdown tables or JSON in known shapes. Ryze AI formats for artifact rendering; Pulselane, Loomstack, and Tasknest return generic JSON; Pivix and marlowe return raw API JSON.

Q: Does Claude Desktop on macOS vs Windows matter?

Hosted MCPs are identical on both. The self-hosted options (Pivix and the marlowe fork) work better on macOS; Windows users often hit Python virtualenv path issues that don’t affect macOS.

Q: Can these MCPs work with Claude Code?

Yes — all hosted MCPs work in any Claude surface (Desktop, Code) because the protocol is identical. Self-hosted requires repeating the JSON config in each client. Ryze AI works seamlessly across all Claude surfaces.

Q: How reliable is each MCP under daily Claude use?

Hosted: 99.4-99.7% over 30 days. Pivix self-hosted: ~92% (OAuth refresh edge cases). marlowe fork: ~85% (lagged 8 days on a Google API breaking change). Reliability favors hosted dramatically.
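The uptime figures in this article are measured at the tool-call layer, so a minimal sketch of that metric is the share of calls that succeeded. The log below is synthetic and the function name is an assumption; the actual test logs are not published here.

```python
def call_level_uptime(outcomes: list[bool]) -> float:
    """Percentage of tool calls that succeeded (True) out of all calls made."""
    if not outcomes:
        raise ValueError("no tool calls recorded")
    return 100.0 * sum(outcomes) / len(outcomes)


# Synthetic log: 997 successful calls and 3 failures.
outcomes = [True] * 997 + [False] * 3
print(f"{call_level_uptime(outcomes):.1f}%")
```

Because this counts calls rather than clock time, an outage that lands during heavy use costs more than its duration alone would suggest, and one that lands while nobody is calling the MCP costs nothing.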

Ryze AI — Best Claude MCP for Google Ads

38 tools. Markdown artifacts. Built for Claude.

- ✓Designed Claude-first

- ✓14 bundled prompt templates

- ✓99.7% measured uptime
