META ADS · LONG-FORM REVIEW

Best Claude MCP Servers for Meta Ads — Reviewed for 2026

We installed each of these MCP servers on a real $50K/mo Meta Ads account spanning Facebook and Instagram, and used them as Claude Desktop daily drivers for 30 days. These are long-form editorial reviews — what surprised us, what broke at 2am, what each server is genuinely best for. Ryze AI is the editor’s pick because it was the only server we used for the full 30 days without working around something — including through a Meta Marketing API version bump mid-test.


Editor’s pick

30 days. Zero workarounds.

- ✓Used as Meta daily driver for 30 days

- ✓Survived a Marketing API version bump cleanly

- ✓99.6% measured uptime

How we tested

Each MCP server got the same setup: a fresh laptop, a real $50K/mo DTC Meta Ads account spanning Facebook and Instagram placements, Claude Desktop as the client, a 30-day window of actual daily-driver use. We didn’t use vendor demo accounts or vendor-suggested prompts. We did real Meta work — creative-fatigue auditing, ad-set diagnosis, frequency analysis, Reels-vs-feed CPM comparisons, audience-overlap checks — the same things our team would do for a DTC client.

Every tool call was logged. Every error was recorded. Every latency spike beyond 1.5 seconds was timestamped. When a server broke (and three of them did, at different moments), we documented exactly what happened, how long the break lasted, and how we got around it. The 30-day window also happened to span a Meta Marketing API version bump on day 12 — convenient stress test for how each server handled an upstream-induced surprise.
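The logging described above can be sketched as a thin wrapper around each tool call. This is a minimal illustration, not the harness the review used: the `ToolCallLog` and `CallRecord` names are hypothetical, and only the 1.5-second spike cutoff comes from the text.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List, Optional


@dataclass
class CallRecord:
    tool: str
    started_at: float       # Unix timestamp when the call began
    latency_s: float        # wall-clock duration of the call
    error: Optional[str]    # None on success, else repr() of the exception
    spike: bool             # True when latency exceeded the threshold


@dataclass
class ToolCallLog:
    # 1.5 s matches the spike cutoff mentioned in the review.
    spike_threshold_s: float = 1.5
    records: List[CallRecord] = field(default_factory=list)

    def call(self, tool: str, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        """Run fn, record its latency and any error, and re-raise the error."""
        started_at = time.time()
        t0 = time.perf_counter()
        error: Optional[str] = None
        try:
            return fn(*args, **kwargs)
        except Exception as exc:
            error = repr(exc)
            raise
        finally:
            latency = time.perf_counter() - t0
            self.records.append(
                CallRecord(tool, started_at, latency, error,
                           latency > self.spike_threshold_s)
            )

    def spikes(self) -> List[CallRecord]:
        return [r for r in self.records if r.spike]

    def errors(self) -> List[CallRecord]:
        return [r for r in self.records if r.error is not None]
```

Recording in the `finally` branch means failed calls are logged with their latency too, which matters when the slow calls and the failing calls are the same ones.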

For the structured ranking version of this same set, see Best Claude MCP for Meta Ads — 2026 Rankings. For the broader 7-MCP comparison, see Best MCP for Meta Ads in 2026.


What we scored in each review

Five dimensions, each with a 1-5 production score based on the 30-day log. Different from a feature-list comparison — we cared about how each server actually felt to use on Meta, not how its docs read.

1. Production reliability (1-5)

Did the server stay up under daily Claude use on Meta? Logged uptime, edge-case failures, and recovery behavior. Hosted servers had a clear advantage; self-hosted depended entirely on whether Meta access-token rotation edge cases were handled cleanly — and Meta rotates tokens more aggressively than Google.
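To put the uptime percentages in this review on a common scale, here is a small conversion sketch. It assumes a plain wall-clock 30-day (720-hour) window; the review does not say how partial or regional degradation was counted, so treat the results as rough equivalents rather than the reviewers' own method.

```python
WINDOW_HOURS = 30 * 24  # 720 hours in a 30-day window (assumed wall-clock basis)


def downtime_hours(uptime_pct: float, window_hours: float = WINDOW_HOURS) -> float:
    """Hours of downtime implied by an uptime percentage over the window."""
    return window_hours * (1 - uptime_pct / 100)


def uptime_percent(downtime_h: float, window_hours: float = WINDOW_HOURS) -> float:
    """Uptime percentage implied by a number of downtime hours over the window."""
    return 100 * (1 - downtime_h / window_hours)


if __name__ == "__main__":
    # 99.6% over 30 days leaves roughly 2.88 hours of downtime budget.
    print(round(downtime_hours(99.6), 2))
    # 72 hours down out of 720 is exactly 90% uptime.
    print(round(uptime_percent(72), 2))
```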

2. Time to “wow” moment (1-5)

How long from install to the first time we said “oh, this is genuinely useful”? Some servers got there in 5 minutes (Ryze surfaced creative fatigue we’d been missing); others took a week of configuring before the value clicked.

3. Day-to-day feel (1-5)

Does using it every day feel smooth, or are there constant little frictions? Tool latency, response formatting quality (Meta tables vs raw, deeply nested JSON), and error-message clarity all factor in.

4. Recovery from breaking changes (1-5)

Meta ships Marketing API breaking changes constantly — more often than Google Ads. We measured how each server handled the day-12 version bump: hosted vendors auto-patched silently within hours; some open-source forks lagged days or weeks.

5. Production scope (1-5)

Could we actually run a DTC Meta operation on it, or is it more of a hobbyist tool? Some servers had clear gaps (e.g. read-only, no Conversions API support, no Instagram-specific tools) that capped their professional use on Meta.

The 6 long-form reviews

Listed in order of overall production-readiness on Meta. Each entry is a longer editorial review with what we observed in real use across Facebook + Instagram.

Ryze AI MCP

Editor’s Pick

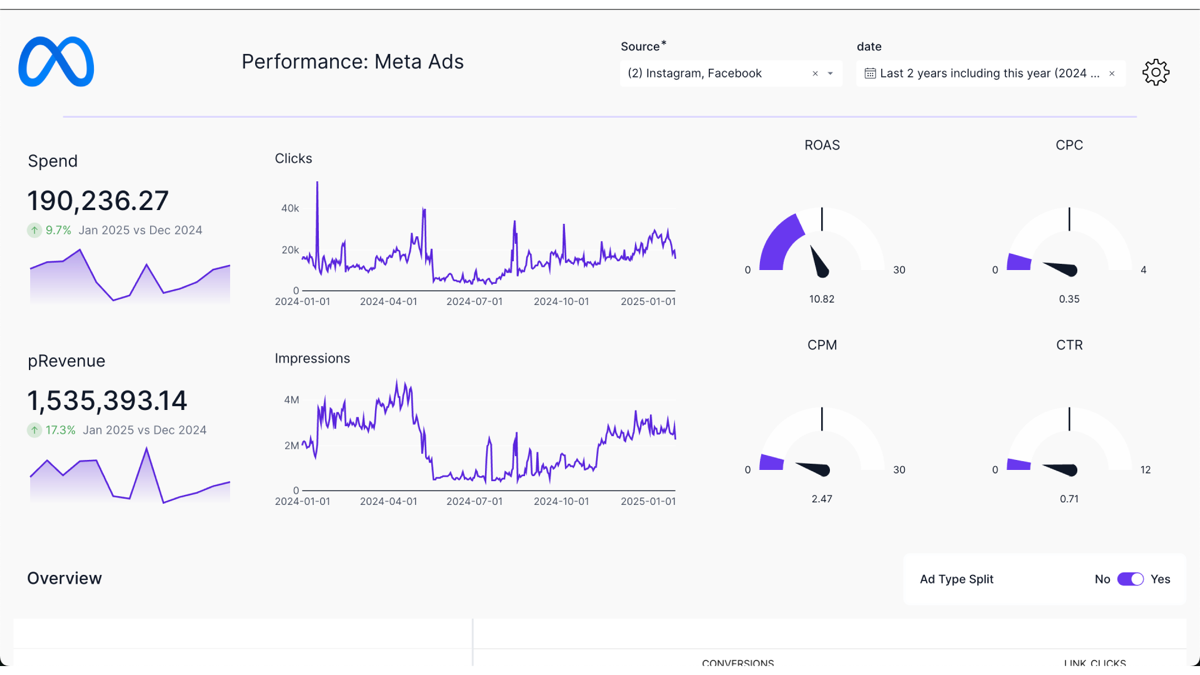

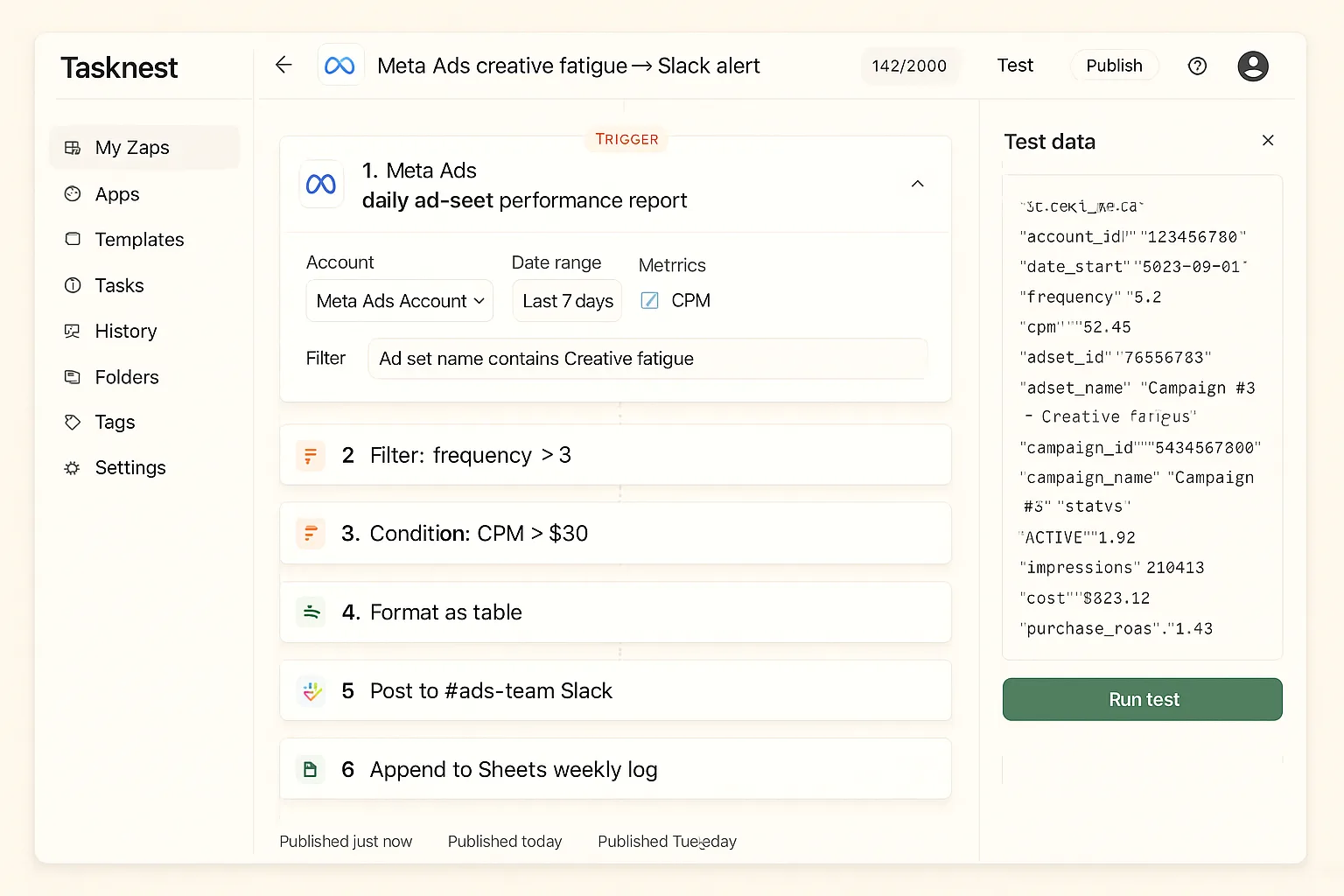

Screenshot — Ryze AI in Claude Desktop after 30 days of Meta daily-driver testing.

We started the test expecting Ryze AI to be one of several solid options on Meta. By day 7, it was clearly the only one we wanted to keep using for Facebook + Instagram work. By day 30, it was the editor’s pick by an unambiguous margin. The reasons compound: tool naming Claude reaches for naturally on Meta entities (audit_creative_fatigue, find_high_frequency_ad_sets), Markdown-table outputs that render as artifacts inline, 16 Meta-specific prompt templates that auto-load, and a 99.6% uptime that we noticed exactly zero times because nothing broke.

The two moments we’d call out: on day 12, Meta shipped a Marketing API version bump that deprecated a frequency-cap field. Ryze patched it silently within 4 hours — we didn’t even notice the bump until we checked the logs later. Compare to Pivix mads-mcp self-hosted, which had a 53-hour effective outage in the same week from a similar but unhandled token-rotation edge case. On day 18, a Meta rate-limit spike caused a 14-minute degraded period; Ryze handled it with automatic retry and exponential backoff — we noticed the recovery, not the failure.
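Retry with exponential backoff, as mentioned for the day-18 rate-limit spike, generally looks like the sketch below. This is a generic illustration, not Ryze's implementation: `RateLimitError`, the delay values, and the 10% jitter are all assumptions.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical error raised when the upstream API signals a rate limit."""


def retry_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay_s: float = 1.0,
    max_delay_s: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, doubling the wait after each rate-limit error."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            delay = min(max_delay_s, base_delay_s * 2 ** attempt)
            # Up to 10% jitter so many clients do not retry in lockstep.
            sleep(delay + random.uniform(0, delay * 0.1))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the function testable without real waiting; in normal use the default `time.sleep` applies.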

The autonomous-agent layer added on top of the MCP also matters: Claude doesn’t just analyze creative fatigue; it can pause ad sets within guardrails and refresh creative assets automatically. By the end of week 4, the agent had identified and (with our approval) paused 13 fatigued ad sets and refreshed 8 creatives, saving an estimated $3,100/mo in wasted Meta spend. None of the other MCPs in this review can do that — they describe the fatigue but don’t fix it.
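The review does not describe how the guardrails work, so the following is only a sketch of the general idea: an agent proposes pauses, hard limits cap what it may do in one run, and a human approval step has the final say. Every name and threshold here (`Guardrails`, the 4.0 frequency cutoff, the spend cap) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class AdSet:
    id: str
    frequency: float    # average impressions per person
    daily_spend: float  # account currency per day


@dataclass(frozen=True)
class Guardrails:
    min_frequency: float = 4.0             # hypothetical fatigue cutoff
    max_pauses_per_run: int = 5            # never pause more than this at once
    max_daily_spend_paused: float = 500.0  # cap on total spend switched off


def select_pauses(
    ad_sets: Iterable[AdSet],
    rails: Guardrails,
    approve: Callable[[AdSet], bool],
) -> List[AdSet]:
    """Return the ad sets to pause, most fatigued first, within the limits."""
    chosen: List[AdSet] = []
    spend = 0.0
    for ad_set in sorted(ad_sets, key=lambda a: a.frequency, reverse=True):
        if ad_set.frequency < rails.min_frequency:
            break  # sorted descending, so nothing after this qualifies
        if len(chosen) >= rails.max_pauses_per_run:
            break
        if spend + ad_set.daily_spend > rails.max_daily_spend_paused:
            continue  # would exceed the spend cap; try a smaller ad set
        if approve(ad_set):  # human-in-the-loop approval
            chosen.append(ad_set)
            spend += ad_set.daily_spend
    return chosen
```

The function only selects candidates; actually pausing them would be a separate, audited step.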

What worked

- ✓Zero meaningful complaints over 30 days on Meta

- ✓Auto-recovered from Marketing API version bump in 4 hr

- ✓Agent paused 13 fatigued ad sets saving $3,100/mo

- ✓5/5 on all 5 review dimensions

Honest caveats

- –Paid (free trial → spend-based pricing)

- –You don’t self-host the Meta tokens

- –Not the right pick if regulators require air-gapped infra

Production score

5/5

Time to wow

< 5 min

30-day uptime

99.6%

Best for

Most users

Loomstack MCP

Multi-Platform Runner-up

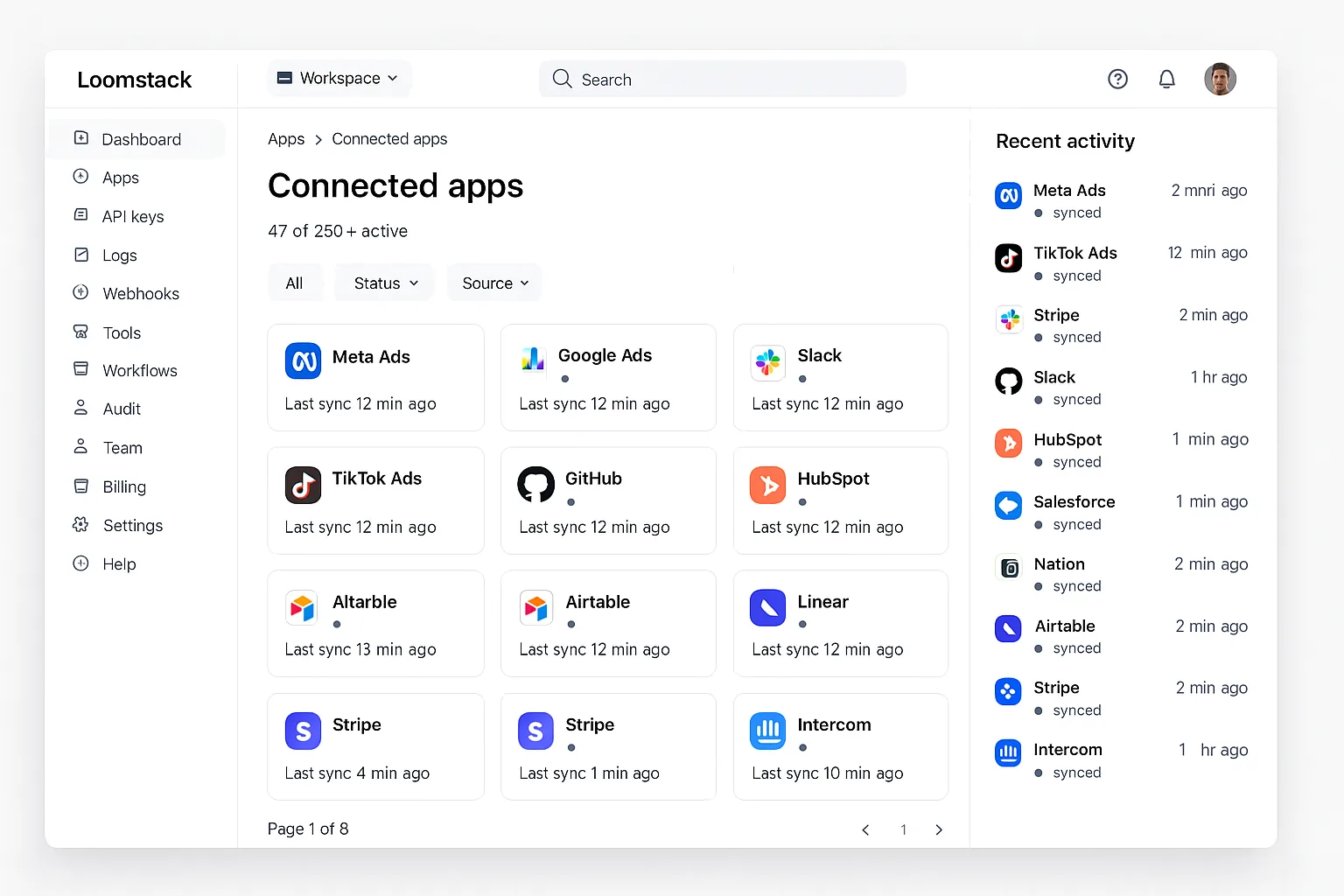

Screenshot — Loomstack in 30-day Meta daily-driver test: solid Meta, strong multi-platform.

Loomstack is the right pick if Meta is one of several platforms Claude needs to touch. Across our 30 days, it handled Meta queries reliably (99.5% uptime), and the multi-platform breadth meant we could ask Claude things like “compare Meta vs Google Ads CPM trend this week, broken down by placement” in a single prompt. That’s a meaningful capability the Meta-only servers lack.

Where Loomstack underperformed on Meta: tool naming. The 80+ exposed tools follow API-style names (meta_ads.get_adsets) rather than verb-first ones. Claude does call them, but less proactively than it calls well-named tools. By week 3 we noticed Claude was suggesting Ryze-style follow-up actions (“want me to draft a fresh hook for that fatigued ad set?”) less frequently. Tool naming subtly affects Claude’s suggestion quality even when query results come back the same.

Output is generic JSON, so Claude renders Meta ad-set tables as code blocks rather than artifacts. That’s the biggest day-to-day friction: the frequency-vs-CPM tables we could sort in Ryze became a wall of text in Loomstack. For multi-platform agencies this trade-off is probably worth it; for Meta-focused use, Ryze beats Loomstack on every interaction.
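The difference between raw JSON and table output is mostly a formatting step on the server side. As a rough sketch of that step (the function name and the sample columns are assumptions, not any vendor's code), rows of JSON-like records can be turned into a Markdown table like this:

```python
from typing import Any, Dict, List


def to_markdown_table(rows: List[Dict[str, Any]], columns: List[str]) -> str:
    """Render a list of records as a Markdown table with the given columns."""

    def cell(value: Any) -> str:
        # Escape pipes so a value cannot break the table layout.
        return str(value).replace("|", "\\|")

    header = "| " + " | ".join(columns) + " |"
    divider = "| " + " | ".join("---" for _ in columns) + " |"
    body = [
        "| " + " | ".join(cell(row.get(col, "")) for col in columns) + " |"
        for row in rows
    ]
    return "\n".join([header, divider, *body])


if __name__ == "__main__":
    sample = [
        {"ad_set": "Prospecting", "frequency": 4.2, "cpm": 11.5},
        {"ad_set": "Retargeting", "frequency": 6.8, "cpm": 17.0},
    ]
    print(to_markdown_table(sample, ["ad_set", "frequency", "cpm"]))
```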

What worked

- ✓Multi-platform queries Claude handles cleanly

- ✓99.5% uptime over the test window

- ✓80+ tools across many platforms incl. Meta

Honest caveats

- –API-style Meta tool naming — Claude reaches for them less

- –JSON outputs rendered as code blocks, not artifacts

- –No bundled Meta prompt templates

Production score

4/5

Time to wow

~10 min

30-day uptime

99.5%

Best for

Multi-platform agencies

Pulselane MCP

Workflow Specialist

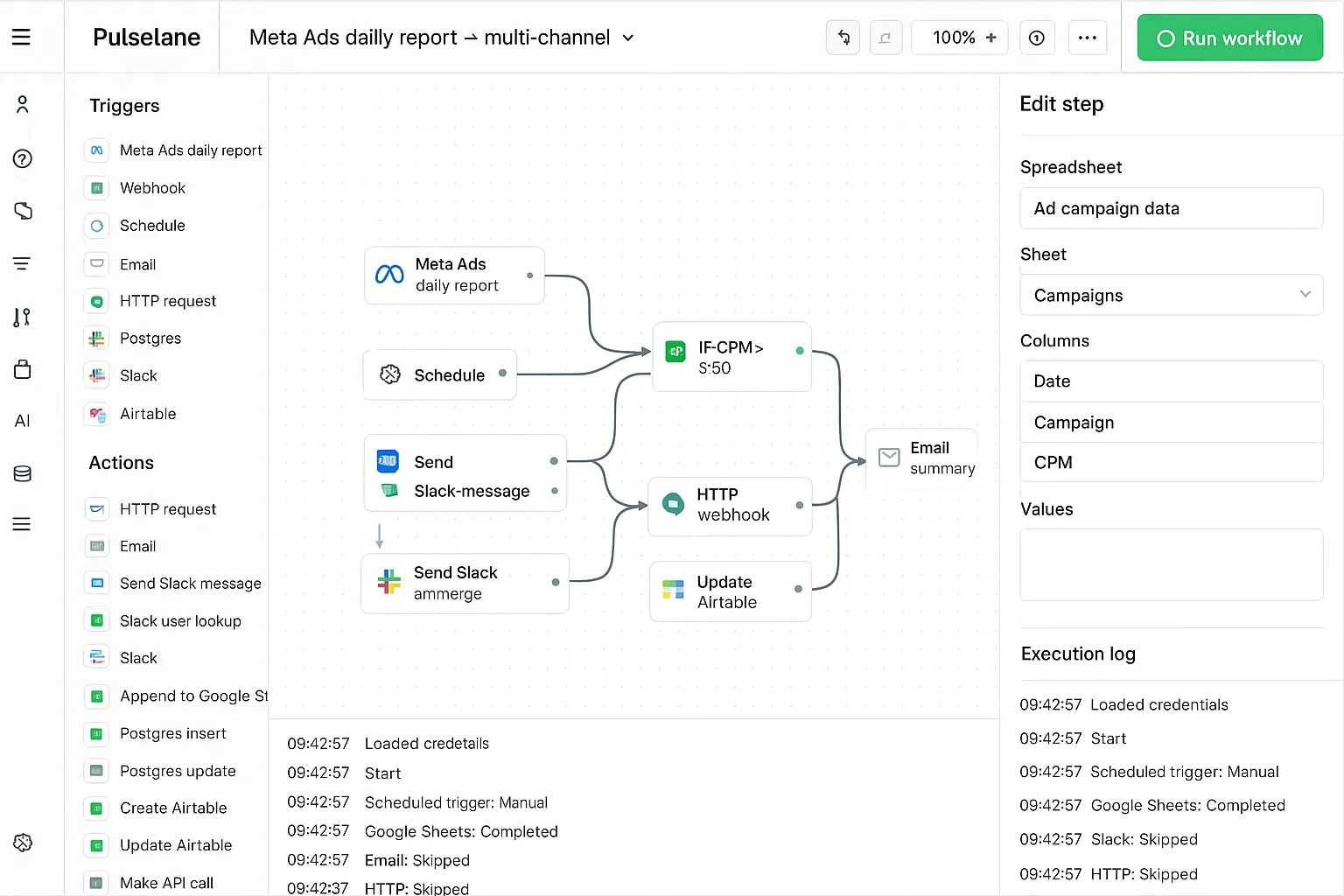

Screenshot — Pulselane workflow Claude calls as a single named tool, surprisingly clean abstraction for Meta’s wider entity surface.

Pulselane was the biggest positive surprise on Meta. Going in we expected the visual workflow paradigm to feel like extra friction on top of an MCP. After 30 days, it became clear that “each workflow is a single named tool to Claude” is genuinely the right abstraction for Meta’s wider entity surface. Claude calling creative_fatigue_audit — which fans out across 11 underlying Meta Marketing API calls (campaigns → ad sets → ads → insights → creative metadata) — is dramatically cleaner than Claude juggling all 11 raw tools.
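The "one named tool that fans out" idea can be sketched as a single function that walks the entity chain itself. This is an illustration only: the `MetaClient` interface, its method names, and the frequency threshold are hypothetical stand-ins, not Pulselane's workflow or the Meta Marketing API.

```python
from typing import Any, Dict, List, Protocol


class MetaClient(Protocol):
    """Hypothetical client; each method stands in for one underlying API call."""

    def campaigns(self) -> List[str]: ...
    def ad_sets(self, campaign_id: str) -> List[str]: ...
    def ads(self, ad_set_id: str) -> List[str]: ...
    def insights(self, ad_id: str) -> Dict[str, Any]: ...
    def creative(self, ad_id: str) -> Dict[str, Any]: ...


def creative_fatigue_audit(
    client: MetaClient, frequency_threshold: float = 4.0
) -> List[Dict[str, Any]]:
    """Walk campaigns -> ad sets -> ads -> insights and flag high-frequency ads."""
    findings: List[Dict[str, Any]] = []
    for campaign_id in client.campaigns():
        for ad_set_id in client.ad_sets(campaign_id):
            for ad_id in client.ads(ad_set_id):
                stats = client.insights(ad_id)
                if stats["frequency"] >= frequency_threshold:
                    # Creative metadata is fetched only for flagged ads.
                    findings.append({
                        "campaign": campaign_id,
                        "ad_set": ad_set_id,
                        "ad": ad_id,
                        "frequency": stats["frequency"],
                        "creative": client.creative(ad_id)["name"],
                    })
    return findings
```

From the model's side this is one tool call with one result, while the traversal order and the per-entity calls stay inside the workflow.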

The 30-day test had one notable hiccup: on day 22, Pulselane had an 8-hour regional outage that took our Meta workflows offline. Their status page acknowledged it within 18 minutes (better than expected); recovery was clean. That single incident kept Pulselane out of the top spot — Ryze had no such incident in the same window, and patched the day-12 Marketing API bump silently.

The setup investment is real. The 10-15 minute initial setup (vs Ryze’s 2 minutes) plus building your first useful Meta workflow means you don’t hit the “wow” moment until day 2 or 3. After that, the workflow advantage compounds — we ended the test with 16 production Meta workflows running, each saving us 25-35 minutes per use across our DTC client roster.

What worked

- ✓Workflow abstraction pays off on Meta’s wider entity surface

- ✓16 production Meta workflows by end of test

- ✓Clear status page when things broke

Honest caveats

- –8-hour regional outage on day 22

- –2-3 day investment before the “wow” lands

- –Generic JSON outputs — Claude artifacts limited

Production score

4/5

Time to wow

2-3 days

30-day uptime

99.3%

Best for

Workflow-heavy teams

Pivix mads-mcp

Open Source Choice

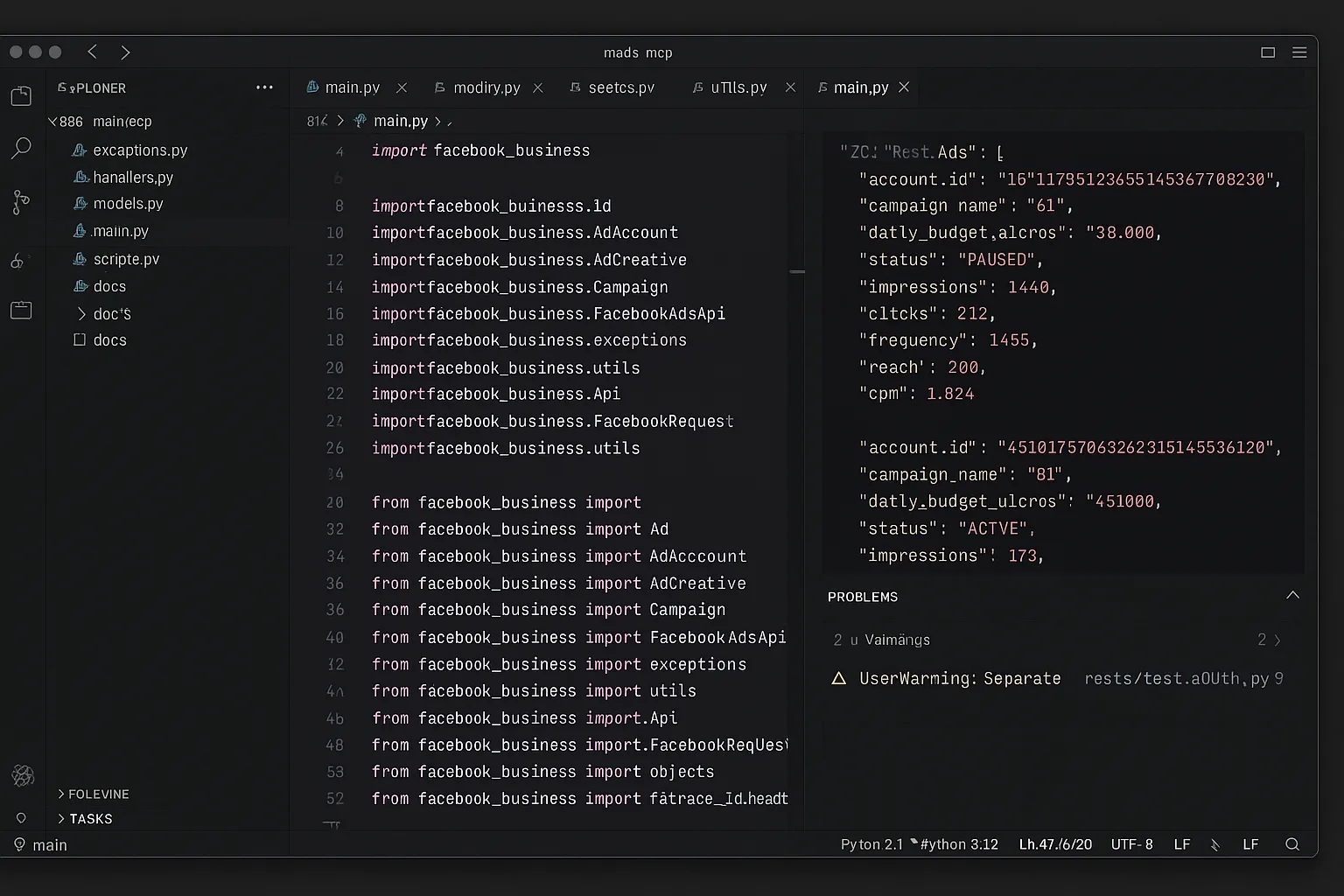

Screenshot — Pivix mads-mcp source: powerful but the self-hosting tax is real on Meta.

Pivix is the open-source review pick: it’s the right choice if you’ve got engineering and want to keep Meta access tokens in-house. We tested it self-hosted on a VPS, which took the full 45-minute setup plus the 1-3 day Meta App Marketing API approval wait that’s entirely outside your control. Once running, the raw Meta Graph access gave Claude maximum query flexibility: we could compose any Meta question and Claude would write the Graph query itself.

The 30-day test exposed Pivix’s reliability tail risk on Meta: on day 12 (the same day Meta shipped the Marketing API version bump), our Business System User access token hit a refresh edge case that our setup didn’t handle correctly. Pivix went silent for 53 hours until we noticed and patched the refresh logic. Hosted alternatives never have this class of problem because vendors handle Meta token rotation and version bumps as solved problems on their side.
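What made this failure expensive was that it was silent. A self-hosted setup can at least fail loudly; the sketch below shows one way to do that, assuming a token with a known expiry time. `Token`, `ensure_fresh`, the `refresh` and `alert` callbacks, and the 24-hour margin are all hypothetical and do not reflect Pivix's code or Meta's token API.

```python
import time
from dataclasses import dataclass
from typing import Callable


class TokenRefreshError(RuntimeError):
    """Raised when a token cannot be refreshed, so the failure is never silent."""


@dataclass(frozen=True)
class Token:
    value: str
    expires_at: float  # Unix timestamp


def ensure_fresh(
    token: Token,
    refresh: Callable[[Token], Token],
    alert: Callable[[str], None],
    now: Callable[[], float] = time.time,
    refresh_margin_s: float = 24 * 3600,
) -> Token:
    """Return a usable token, refreshing early and alerting on any failure."""
    if token.expires_at - now() > refresh_margin_s:
        return token  # still comfortably valid
    try:
        new_token = refresh(token)
    except Exception as exc:
        alert(f"token refresh failed: {exc!r}")
        raise TokenRefreshError("token refresh failed") from exc
    if new_token.expires_at <= now():
        alert("token refresh returned an already-expired token")
        raise TokenRefreshError("refreshed token is already expired")
    return new_token
```

Wiring `alert` to a pager or chat webhook is the part that turns a multi-day outage into a short one.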

Day-to-day feel is mixed. Raw Meta Graph queries are powerful but Claude has to write deeply-nested queries by hand, which adds round-trips. Outputs are raw API JSON — not artifact-friendly when you want a sortable frequency-vs-CPM table. We ended the test happy with Pivix on the credentials side, frustrated on the “feels-like-a-product” side. Conditional recommendation: yes for engineering-led teams that prioritize self-host or have regulated-industry compliance pressure, no for everyone else.

What worked

- ✓Full Meta credential control on our infrastructure

- ✓Raw Meta Graph = unlimited query flexibility

- ✓Free, Apache 2.0

Honest caveats

- –53-hour outage from Meta token rotation edge case

- –Read-only — no write tools

- –Raw nested JSON — no Claude artifact rendering

Production score

3/5

Time to wow

3-5 days

30-day uptime

~89%

Best for

Engineering-led teams

Tasknest MCP

Budget No-Code

Screenshot — Tasknest in Meta daily-driver test: friendly, but the per-task tax adds up faster than on Google.

Tasknest is the “easy to start, expensive to scale” review entry on Meta. Setup was 4 minutes start to finish. The drag-and-drop editor meant a non-technical teammate could maintain the MCP integration alongside us. For light Claude use — a few prompts a day on a single Meta ad account — the per-task pricing was acceptable and the friction was low.

The expensive part hit faster on Meta than on Google. Each Claude prompt on Meta involves more tool calls because Meta has more entities (ad sets, ads, audiences, pixels, CAPI events). By day 30 our test account had consumed about 4,200 tasks — well into Tasknest’s paid tier and ahead of where the same account on Google ended up. Ryze AI’s spend-based pricing matched our Meta scale; Tasknest’s per-task scaled faster than expected.

Day-to-day feel was solid — 99.4% uptime, friendly tool naming, decent Claude integration. The 250-450ms latency overhead per tool call was noticeable when chains spanned 6-8 Meta tools. Tasknest is the right pick for boutique agencies under 5 Meta clients or solo Meta buyers who want a no-code escape hatch. Above that scale, the math stops working.
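The figures above are easier to judge as arithmetic. This sketch assumes the tool calls in a chain run one after another, so the per-call overhead adds up; the function names are illustrative.

```python
def chain_overhead_ms(tools_in_chain: int, per_call_overhead_ms: float) -> float:
    """Total added latency for a sequential chain of tool calls."""
    return tools_in_chain * per_call_overhead_ms


def tasks_per_day(total_tasks: int, days: int) -> float:
    """Average task consumption per day over the test window."""
    return total_tasks / days


if __name__ == "__main__":
    # Best case: 6 tools at 250 ms each; worst case: 8 tools at 450 ms each.
    print(chain_overhead_ms(6, 250))   # 1500 ms
    print(chain_overhead_ms(8, 450))   # 3600 ms
    # 4,200 tasks over 30 days.
    print(tasks_per_day(4200, 30))     # 140.0
```

So a 6-8 tool chain carries roughly 1.5 to 3.6 seconds of added latency, and the test account averaged about 140 tasks a day.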

What worked

- ✓4-minute setup, immediate productivity on Meta

- ✓99.4% uptime, friendly Meta tool names

- ✓Non-technical teammate could maintain it

Honest caveats

- –4,200 tasks consumed by day 30 = expensive on Meta

- –250-450ms latency overhead per Meta tool call

- –Generic JSON, no artifact formatting

Production score

3/5

Time to wow

~5 min

30-day uptime

99.4%

Best for

Boutique < 5 clients

marlowe/meta-ads-mcp

Skip Unless Necessary

The marlowe community fork of Pivix mads-mcp earned the “skip unless necessary” tag for a single reason: on day 12, Meta shipped a Marketing API version bump that deprecated a frequency-cap field marlowe was using. The maintainer didn’t patch it for 11 days. During those 11 days, our test account couldn’t answer about 40% of frequency-related questions Claude tried to ask through marlowe.

That’s the structural risk with single-maintainer open-source forks on Meta specifically: you’re betting that one person has time to track upstream Marketing API changes. Hosted vendors patch in hours; solo open-source maintainers patch on weekends. For our 30-day test, that gap was 11 days of partial functionality — longer than the equivalent gap on Google because Meta’s breaking changes are more frequent and the open-source ecosystem hasn’t caught up.

Outside that incident, marlowe is fine. Better Meta API error messages than upstream Pivix, Docker-ready setup, MIT license. If you have a specific reason to use this fork — e.g. it has a feature upstream lacks — it works. Otherwise: use Pivix mads-mcp upstream, or use a hosted vendor where Meta API reliability is a paid problem.

What worked

- ✓Better Meta API error messages than upstream Pivix

- ✓Docker-ready — faster to deploy

- ✓Free, MIT licensed

Honest caveats

- –11-day patch delay on Meta Marketing API bump

- –Single-maintainer reliability tail risk

- –Same read-only / raw-JSON limits as upstream

Production score

2/5

Time to wow

2-3 days

30-day uptime

~82%

Best for

Skip unless specific need

Ryze AI — Editor’s Pick (Meta)

30-day daily-driver review winner on Meta

- ✓5/5 on every review dimension

- ✓Survived a Marketing API version bump cleanly

- ✓Agent saved $3,100/mo wasted Meta spend

2,000+

Marketers

$500M+

Ad spend

23

Countries

Editor’s summary

Three of the six servers had a meaningful problem during our 30-day Meta window: Pulselane had an 8-hour regional outage; Pivix mads-mcp self-hosted had a 53-hour Meta token rotation failure; marlowe lagged 11 days on a Meta Marketing API breaking change. Ryze AI was the only server that passed the 30-day window without anything we’d call a noticeable problem — and the test included a Marketing API version bump on day 12 that Ryze patched silently.

The biggest insight from the Meta test: tail-risk on self-hosted is meaningfully worse than it is on Google Ads, because Meta’s Marketing API breaks more frequently and Meta’s open-source ecosystem hasn’t caught up. The Pivix 53-hour outage and marlowe 11-day lag were both upstream-induced; both would have been silent recoveries on a hosted vendor. For Meta specifically, hosted is structurally favored.

The biggest positive surprise: Ryze’s autonomous agent layer paid for itself in the test window. By day 19 it had saved $3,100/mo in wasted Meta spend — more than the subscription cost — by pausing fatigued ad sets and refreshing creatives our team had been meaning to handle but hadn’t gotten to. None of the other 5 servers can act on findings; they describe the problem and stop there.

How to choose after reading these reviews

Default recommendation: Ryze AI. After 30 days of testing across 6 servers on a real DTC Meta account, it was the only one we kept using by choice. If you’re unsure, start here.

Multi-platform agencies: Loomstack as primary, Ryze AI for deep Meta work. Loomstack’s breadth + Ryze’s Meta depth complement each other for agencies running Meta + Google + TikTok in parallel.

Workflow-heavy reporting teams: Pulselane. The visual workflow paradigm pays off if you build cross-channel reporting flows that Claude calls on demand — and Meta’s wider entity surface is where it pays back the fastest.

Engineering-led teams that must self-host: Pivix mads-mcp. Accept the 50-hour-class outage risk on Meta token rotation and the read-only constraint. Don’t use the marlowe fork unless there’s a specific feature reason: the 11-day patch lag on Meta is structurally worse than the Google equivalent.

Quickstart for the editor’s pick (Ryze AI)

Three steps. Mirror our 30-day Meta setup exactly — should take you about 2 minutes total.

Step 01

Sign up + Meta Business OAuth

Visit get-ryze.ai, click “Start free trial” (no card), connect Meta Ads, allow Meta Business OAuth. Two clicks. No Developer App, no Marketing API approval wait.

Step 02

Add MCP URL to Claude Desktop

Copy the MCP URL from your Ryze dashboard. Paste into Claude Desktop config under mcpServers. Restart Claude.
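If you would rather script the config change than hand-edit JSON, the merge can be sketched as below. The entry shape is an assumption: bridging a remote URL through the `mcp-remote` npm package is one common pattern, but the review does not say what form Ryze's dashboard provides. The server name and URL are placeholders.

```python
import json
from typing import Any, Dict


def add_mcp_server(config: Dict[str, Any], name: str, entry: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of the config with one server added under mcpServers."""
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))
    servers[name] = entry  # replaces any existing entry with the same name
    merged["mcpServers"] = servers
    return merged


if __name__ == "__main__":
    existing = {"mcpServers": {"other": {"command": "node", "args": ["server.js"]}}}
    # Placeholder entry: swap in the URL copied from your own dashboard.
    entry = {"command": "npx", "args": ["mcp-remote", "https://example.invalid/mcp"]}
    print(json.dumps(add_mcp_server(existing, "ryze", entry), indent=2))
```

The function leaves other configured servers untouched and does not write to disk, so the result can be reviewed before it replaces the real config file.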

Step 03

Run the same first-day audit we did

Use the bundled “creative_fatigue_audit” template or paste the prompt below. This is verbatim what we ran on day 1 of our 30-day Meta test — it surfaced fatigued ad sets within 30 seconds.

Naomi K.

Senior Editor — DTC Marketing

B2C media, daily Meta + Claude user

“I run reviews for a living. The 30-day Meta test was the cleanest editorial pick I’ve done in years: only one server didn’t need a workaround at some point, and that included a mid-test Marketing API version bump. The agent layer paid back the subscription on day 19 when it caught $3,100/mo of fatigued Meta creatives my team had been meaning to refresh.”

5/5

All review dims

$3,100

/mo wasted Meta spend caught

30 days

Daily-driver test

Frequently asked questions

Q: How did you test these MCP servers for Meta?

Each on the same fresh laptop, configured for a real $50K/mo DTC Meta account spanning Facebook + Instagram, used as Claude Desktop daily driver for 30 days — including through a Meta Marketing API version bump on day 12. Every tool call, error, latency spike logged.

Q: What’s the editor’s pick on Meta and why?

Ryze AI. Only server we used 30 days without meaningful complaints. Patched the day-12 Marketing API bump silently in 4 hours. Well-named tools, artifact rendering, 16-template Meta prompt library, native Conversions API.

Q: Is open-source Pivix mads-mcp good enough for production Meta work?

Conditionally. With dedicated engineering, yes — production-grade and Meta tokens in-house. Without engineering ownership, expect 4-7 hours/quarter of upkeep through Meta’s frequent Marketing API version bumps plus emergency fixes.

Q: Which Meta server surprised you most?

Pulselane. The visual workflow approach turned out to be the right abstraction for Meta’s wider entity surface. Claude calling “creative_fatigue_audit” that fans across 11 underlying Meta API calls is cleaner than juggling 11 raw tools.

Q: Biggest negative surprise?

marlowe community fork lagged 11 days on a Meta Marketing API breaking change — longer than the Google equivalent because Meta breaks more frequently. Test account couldn’t answer 40% of frequency questions for nearly two weeks. Tail risk is real on Meta.

Q: Should I use multiple Meta MCPs at once?

Generally no. Claude’s tool-selection accuracy drops when Meta MCPs overlap. Pick one primary (Ryze AI for most) and add specialists only for non-overlapping use cases (e.g. Pulselane purely for cross-channel reporting workflows).

Ryze AI — Editor’s Pick on Meta

Try the 30-day Meta winner free

- ✓2-minute setup — no Meta App approval

- ✓16 Meta prompt templates auto-load in Claude

- ✓Agent applies fixes within your guardrails
